In a quiet corner of medical research, a handful of scientists just watched their timelines collapse from years to months.

They set out to test whether artificial intelligence could really take over the most tedious parts of data science. What followed was a controlled experiment that hints at a radical change in how quickly medical discoveries might reach patients.

From months of coding to a few minutes of AI work

Behind almost every modern medical study sits a mountain of data. Before anyone can ask meaningful questions, that data needs cleaning, structuring and feeding into predictive models. This stage routinely drags on for months and often decides how quickly a project moves, or stalls.

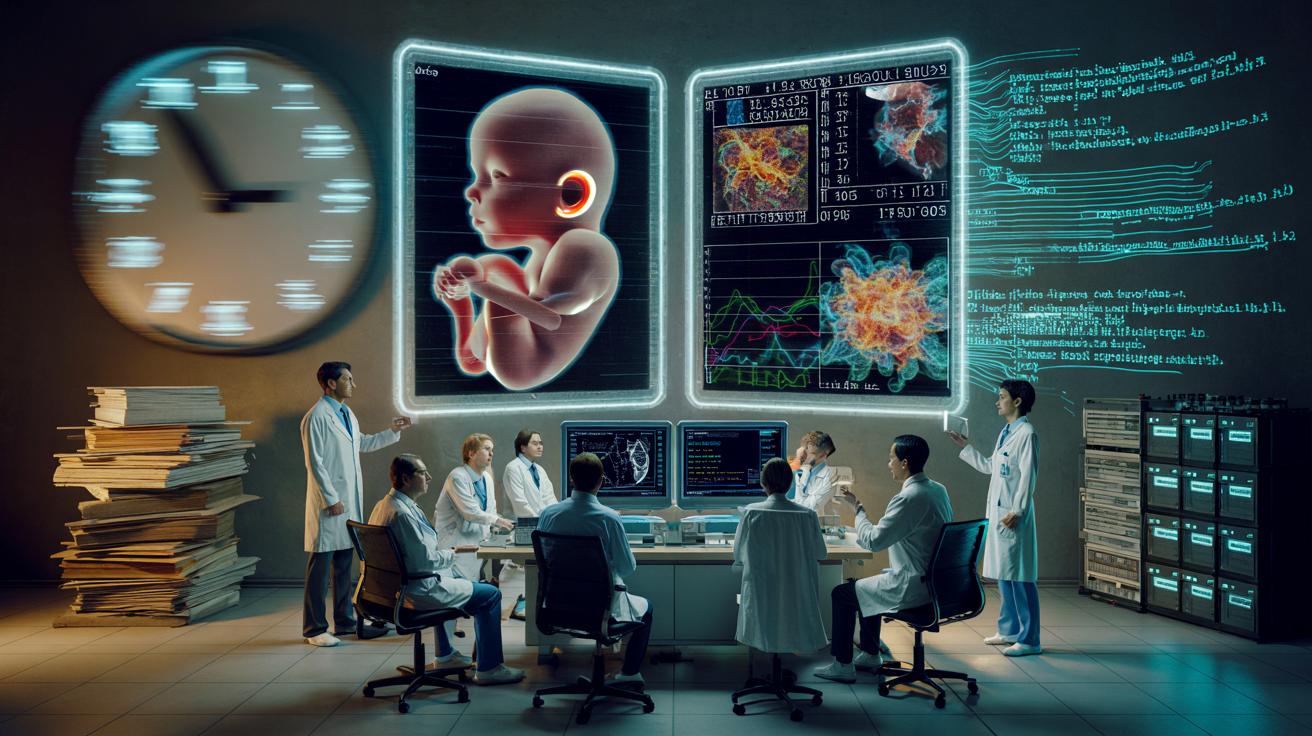

Researchers from the University of California, San Francisco, and Wayne State University decided to put this bottleneck under a microscope. They took a real clinical challenge—predicting premature births from pregnancy data—and ran a head-to-head contest: human teams versus AI systems asked to generate code and build predictive models.

Both sides received the same raw information: microbiome data from more than 1,000 pregnancies, drawn from several previous studies. The task was to use those data to estimate which pregnancies were most likely to end too early.

Where experienced programmers might spend several hours or days writing and refining code, some AI tools produced workable scripts in minutes.

The speed-up was not limited to senior experts. In one striking case, a pair consisting of a master’s student and a high school pupil managed to create credible models with AI assistance. Freed from the slow grind of technical setup, they could move quickly to checking results, interpreting them and preparing a manuscript for a scientific journal.

Why code generation matters in medicine

Medical datasets are often messy. Different hospitals use different formats, variable names and measurement standards. Turning this chaos into something a statistical model can use is usually the job of specialist data scientists.

AI code generators shift that balance. By transforming plain-language instructions into usable R or Python code, they can automate large parts of this pipeline:

- Importing and cleaning raw datasets

- Handling missing values and outliers

- Splitting data into training and validation sets

- Testing multiple modelling approaches

- Producing performance metrics and basic plots
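To make these steps concrete, here is a minimal sketch of such a pipeline in plain Python. It is not the code used in the study: the data are synthetic, the single `feature` column and every function name are hypothetical, and the "model" is a deliberately simple cutoff compared against a majority-class baseline.

```python
import random
import statistics


def make_synthetic_rows(n, seed=0):
    """Hypothetical stand-in for a raw dataset: one noisy feature, one binary label."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        label = 1 if rng.random() < 0.3 else 0
        value = rng.gauss(2.0 if label else 0.0, 1.0)
        if rng.random() < 0.1:
            value = None  # simulate a missing measurement
        rows.append({"feature": value, "label": label})
    return rows


def split_rows(rows, validation_fraction=0.25, seed=0):
    """Shuffle a copy of the rows and split into training and validation sets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - validation_fraction))
    return shuffled[:cut], shuffled[cut:]


def impute(rows, fill_value):
    """Replace missing feature values with a fixed fill value."""
    return [
        {**r, "feature": fill_value if r["feature"] is None else r["feature"]}
        for r in rows
    ]


def fit_cutoff(train):
    """Toy model: a cutoff halfway between the two class means."""
    pos = [r["feature"] for r in train if r["label"] == 1]
    neg = [r["feature"] for r in train if r["label"] == 0]
    return (statistics.mean(pos) + statistics.mean(neg)) / 2


def accuracy(rows, predict):
    """Share of rows where the prediction equals the label."""
    return sum(predict(r) == r["label"] for r in rows) / len(rows)


def run_pipeline(n=400, seed=0):
    train, valid = split_rows(make_synthetic_rows(n, seed), seed=seed)
    # Learn the fill value from training rows only, then apply it to both splits.
    observed = [r["feature"] for r in train if r["feature"] is not None]
    fill = statistics.median(observed)
    train, valid = impute(train, fill), impute(valid, fill)
    # Two modelling approaches: a learned cutoff and a majority-class baseline.
    cutoff = fit_cutoff(train)
    majority = 1 if 2 * sum(r["label"] for r in train) > len(train) else 0
    return {
        "n_train": len(train),
        "n_valid": len(valid),
        "cutoff_accuracy": accuracy(valid, lambda r: int(r["feature"] > cutoff)),
        "baseline_accuracy": accuracy(valid, lambda r: majority),
    }


result = run_pipeline()
print(result)
```

Even in a toy like this, the order of operations matters: the fill value for missing data is learned from the training rows alone, so nothing from the validation set leaks into the model.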

For researchers without deep programming expertise, this removes one of the most intimidating barriers. For experienced teams, it means less time on repetitive tasks and more on the scientific questions that actually matter.

The medical stakes: premature birth as a test case

The experiment was never just a coding challenge. Premature birth is the leading cause of neonatal death worldwide and a major source of long-term motor and cognitive disabilities. In the United States alone, close to 1,000 babies are born too early every day.

Earlier and more accurate prediction could change clinical decisions. High‑risk pregnancies might benefit from closer monitoring, preventive treatments, or delivery in better equipped hospitals. Late recognition, by contrast, leaves teams reacting rather than planning.

To push their models, the researchers pooled microbiome data from roughly 1,200 pregnant women across nine different studies. This kind of meta-analysis reflects a broader trend: instead of one hospital’s data, projects now span continents. Traditional methods struggle to capture all the patterns hidden in such huge, diverse datasets.

Dozens of international teams had already worked on the same pregnancy data in previous challenges, and many needed about three months to build their models.

Once those human-led models were complete, another clock started ticking. Gathering the results, comparing methods, drafting manuscripts and passing peer review took almost two years. The science did not stand still, but the translation of findings into usable knowledge moved slowly.

When generative AI runs the full pipeline

In the new study, the researchers went a step further. They asked eight different AI systems to build predictive models from the pregnancy data with no human writing the code. Four of the eight managed to produce complete, executable pipelines.

The performance of those AI-driven models held up surprisingly well. In several cases, their accuracy matched or even exceeded the best human teams from earlier international competitions. The researchers also showed that some large language models could generate the entire workflow—from importing data to evaluating results—within a single conversation.

The entire project, from first idea to journal submission, was wrapped up in six months rather than the years seen in comparable efforts.

That time compression may reshape daily life in research labs. Instead of wrestling for weeks with bugs and formatting errors, scientists can run more hypotheses in parallel, reject weak ones quickly and refine the promising leads.

What AI changes for medical researchers

The study points toward a shift in how medical data work is divided between humans and machines:

| Task | Primarily handled by |
|---|---|
| Code generation and formatting | AI tools |
| Routine data cleaning and transformation | AI, with human checks |
| Choice of clinical questions | Human experts |
| Interpretation of model outputs | Clinicians and statisticians |
| Ethical and safety decisions | Regulators and medical teams |

The promise is not simply doing the same science faster. Shorter feedback loops can change which projects are even attempted. A hospital research group might once have avoided complex modelling because they lacked in‑house programmers. With code‑writing AI, those groups can at least try a pilot analysis.

Faster iteration also encourages more rigorous testing. When a model is painful to rebuild, teams hesitate to run alternate scenarios. If the rebuild takes minutes, sensitivity checks and external validations become much more realistic.
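One check that becomes cheap when rebuilding is fast is to hold out each contributing study in turn and see whether a model trained on the others still performs. The sketch below illustrates that idea on toy data. It is an assumption about what such a check could look like, not a description of the study's method, and the `study`, `x` and `label` fields and all function names are hypothetical.

```python
from collections import defaultdict
import statistics


def leave_one_study_out(rows, fit, score, study_key="study"):
    """Hold out each study in turn, fit on the rest, score on the held-out study."""
    by_study = defaultdict(list)
    for row in rows:
        by_study[row[study_key]].append(row)
    results = {}
    for held_out, test_rows in by_study.items():
        train_rows = [r for s, rs in by_study.items() if s != held_out for r in rs]
        model = fit(train_rows)
        results[held_out] = score(model, test_rows)
    return results


def fit_mean_cutoff(rows):
    """Toy model: a cutoff halfway between the two class means."""
    pos = [r["x"] for r in rows if r["label"] == 1]
    neg = [r["x"] for r in rows if r["label"] == 0]
    return (statistics.mean(pos) + statistics.mean(neg)) / 2


def accuracy(cutoff, rows):
    """Share of rows classified correctly by the cutoff."""
    return sum((r["x"] > cutoff) == bool(r["label"]) for r in rows) / len(rows)


# Tiny made-up dataset with three hypothetical source studies.
toy_rows = [
    {"study": "A", "x": 0.1, "label": 0}, {"study": "A", "x": 1.9, "label": 1},
    {"study": "B", "x": 0.3, "label": 0}, {"study": "B", "x": 2.2, "label": 1},
    {"study": "C", "x": -0.2, "label": 0}, {"study": "C", "x": 1.7, "label": 1},
]

per_study = leave_one_study_out(toy_rows, fit_mean_cutoff, accuracy)
print(per_study)
```

A large drop in the score for one held-out study would suggest the model has learned something specific to the other datasets rather than a general pattern.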

Limits, blind spots and the need for human judgment

The researchers are quick to point out that the tools are far from flawless. Half of the AI systems tested failed to generate fully usable code. Others produced scripts that ran but contained subtle errors or odd modelling choices.

There is a real risk that an impressive‑looking pipeline hides a mistake: a mislabelled variable, a biased sample split or an incorrect handling of missing data. Without a trained eye, such glitches can go unnoticed and skew clinical conclusions.
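Some of these mistakes can be caught with simple automated checks before anyone trusts a result. The sketch below is illustrative only: the `sample_id` and `label` fields, the 0/1 label coding and the 10-point tolerance are all assumptions, and a real review would go well beyond three checks.

```python
def audit_split(train, valid, max_prevalence_gap=0.10):
    """Return a list of human-readable warnings about a train/validation split."""
    issues = []

    # 1. The same sample must not appear in both splits.
    shared = {r["sample_id"] for r in train} & {r["sample_id"] for r in valid}
    if shared:
        issues.append(f"{len(shared)} sample(s) appear in both splits")

    # 2. Labels should only take the expected values (assumed here to be 0 or 1).
    bad_labels = {r["label"] for r in train + valid} - {0, 1}
    if bad_labels:
        issues.append(f"unexpected label values: {sorted(bad_labels, key=str)}")

    # 3. The outcome rate should be broadly similar in both splits.
    def prevalence(rows):
        labels = [r["label"] for r in rows if r["label"] in (0, 1)]
        return sum(labels) / len(labels) if labels else 0.0

    gap = abs(prevalence(train) - prevalence(valid))
    if gap > max_prevalence_gap:
        issues.append(f"outcome rate differs by {gap:.2f} between splits")

    return issues


clean_train = [{"sample_id": i, "label": i % 2} for i in range(10)]
clean_valid = [{"sample_id": i, "label": i % 2} for i in range(10, 20)]
leaky_valid = clean_valid + [clean_train[0]]  # one training sample leaks across

print(audit_split(clean_train, clean_valid))
print(audit_split(clean_train, leaky_valid))
```

Checks like these do not replace a statistician's review, but they turn a few of the silent failures described above into visible warnings.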

AI can write code, but it still cannot take responsibility for a wrong diagnosis or a flawed study.

Human expertise remains central at three stages. First, framing the question: deciding which outcome to predict and which variables to include needs clinical understanding. Second, quality control: statisticians and domain experts must review both code and results. Third, communication: translating model outputs into advice that doctors can use at the bedside requires nuance that machines do not yet have.

Key terms that shape the debate

Several technical expressions appear often in this debate and shape how non‑specialists understand the stakes:

- Predictive model: a mathematical tool that estimates the probability of a future event, such as premature birth, based on current data.

- Pipeline: the full sequence of steps that takes raw data to a final result, including cleaning, modelling and evaluation.

- Generative AI: systems that can create content—code, text, images—when prompted in everyday language.

- Microbiome data: information about the communities of bacteria and other microbes living in or on the human body, often linked to health outcomes.
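For readers who want to see what a predictive model can look like in its simplest form, the sketch below uses a logistic function to turn a weighted sum of measurements into a probability between 0 and 1. The feature names, weights and intercept are invented for illustration and have no clinical meaning.

```python
import math


def predict_probability(features, weights, intercept):
    """Logistic model: map a weighted sum of features to a probability."""
    score = intercept + sum(weights[name] * value for name, value in features.items())
    # Numerically stable form of 1 / (1 + exp(-score)).
    if score >= 0:
        return 1.0 / (1.0 + math.exp(-score))
    e = math.exp(score)
    return e / (1.0 + e)


# Hypothetical weights; a real model would learn these from data.
weights = {"marker_a": 1.2, "marker_b": -0.7}
low = predict_probability({"marker_a": 0.1, "marker_b": 1.0}, weights, intercept=-1.0)
high = predict_probability({"marker_a": 2.0, "marker_b": 0.2}, weights, intercept=-1.0)
print(round(low, 3), round(high, 3))
```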

What this might look like in real hospitals

Imagine a maternity ward linked to a regional data hub. As new pregnancy check‑ups are logged, an AI‑generated model quietly updates individual risk scores in the background. Clinicians see an alert when a patient’s profile shifts into a higher‑risk band, prompting a closer review or a specialist referral.
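The alerting step in this scenario could be as simple as the sketch below. The band names and cutoffs are hypothetical, chosen only to show the logic; real thresholds would need clinical validation.

```python
# Hypothetical risk bands: (lower bound of predicted probability, band name).
BANDS = [(0.0, "low"), (0.10, "medium"), (0.30, "high")]


def risk_band(score):
    """Return the name of the highest band whose lower bound the score reaches."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability between 0 and 1")
    label = BANDS[0][1]
    for lower, name in BANDS:
        if score >= lower:
            label = name
    return label


def needs_alert(previous_score, current_score):
    """Alert only when the patient moves into a higher band than before."""
    order = [name for _, name in BANDS]
    return order.index(risk_band(current_score)) > order.index(risk_band(previous_score))


print(risk_band(0.05), risk_band(0.12), needs_alert(0.05, 0.12))
```

Alerting only on a move into a higher band, rather than on every score change, is one way to limit the number of notifications clinicians see.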

Behind that seemingly simple alert lies a constantly evolving model, retrained overnight as new cases arrive. Maintaining such systems by hand would be nearly impossible. With AI handling much of the coding, teams can adjust variables, add new types of data—such as genetic markers or wearable sensor readings—and rerun analyses without restarting from zero.

There are obvious safeguards to consider: strict privacy rules, external audits of model fairness, and clear protocols to prevent over‑reliance on automated scores. Yet the same infrastructure could extend beyond pregnancy. Similar pipelines could flag early signs of sepsis, detect potential drug interactions or identify patients slipping through follow‑up schedules after surgery.

Balancing speed, risk and opportunity

The acceleration shown in the premature birth study brings both benefits and pressures. Regulators will face more frequent submissions for novel algorithms, all claiming clinical value. Journals may need new standards to evaluate research in which machines have written large parts of the codebase.

For patients, the stakes are simple. If AI‑assisted research can convert raw data into solid evidence faster, treatments and preventive strategies may reach clinics sooner. The challenge is ensuring that the rush for speed does not sideline careful checking, transparency and trust—elements that machines cannot shortcut, no matter how quickly they write the code.